1. Prometheus概述

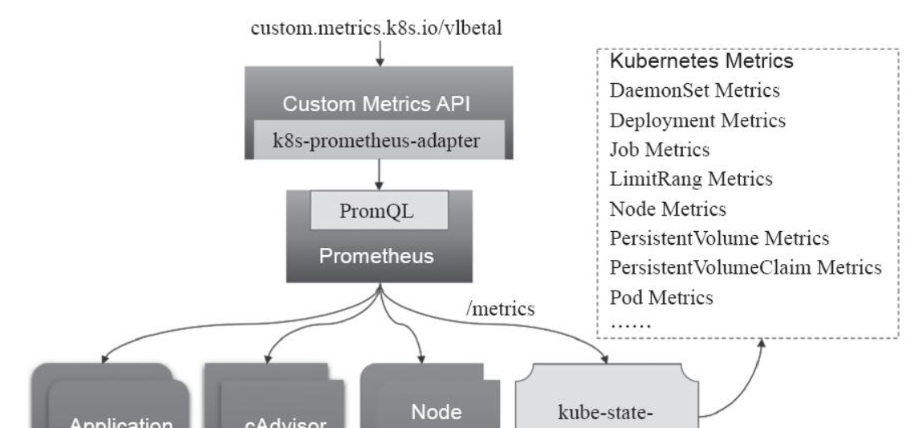

除了前面的资源指标(如CPU、内存)以外,用户或管理员需要了解更多的指标数据,比如Kubernetes指标、容器指标、节点资源指标以及应用程序指标等等。自定义指标API允许请求任意的指标,其指标API的实现要指定相应的后端监视系统。而Prometheus是第一个开发了相应适配器的监控系统。这个适用于Prometheus的Kubernetes Customm Metrics Adapter是属于Github上的k8s-prometheus-adapter项目提供的。其原理图如下:

要知道的是prometheus本身就是一监控系统,也分为server端和agent端,server端从被监控主机获取数据,而agent端需要部署一个node_exporter,主要用于数据采集和暴露节点的数据,那么 在获取Pod级别或者是mysql等多种应用的数据,也是需要部署相关的exporter。我们可以通过PromQL的方式对数据进行查询,但是由于本身prometheus属于第三方的 解决方案,原生的k8s系统并不能对Prometheus的自定义指标进行解析,就需要借助于k8s-prometheus-adapter将这些指标数据查询接口转换为标准的Kubernetes自定义指标。

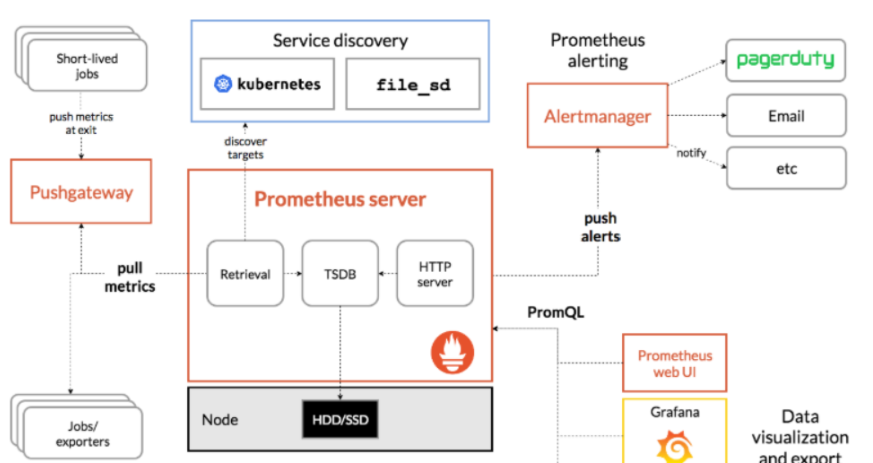

Prometheus是一个开源的服务监控系统和时序数据库,其提供了通用的数据模型和快捷数据采集、存储和查询接口。它的核心组件Prometheus服务器定期从静态配置的监控目标或者基于服务发现自动配置的目标中进行拉取数据,新拉取到啊的 数据大于配置的内存缓存区时,数据就会持久化到存储设备当中。Prometheus组件架构图如下:

如上图,每个被监控的主机都可以通过专用的exporter程序提供输出监控数据的接口,并等待Prometheus服务器周期性的进行数据抓取。如果存在告警规则,则抓取到数据之后会根据规则进行计算,满足告警条件则会生成告警,并发送到Alertmanager完成告警的汇总和分发。当被监控的目标有主动推送数据的需求时,可以以Pushgateway组件进行接收并临时存储数据,然后等待Prometheus服务器完成数据的采集。

任何被监控的目标都需要事先纳入到监控系统中才能进行时序数据采集、存储、告警和展示,监控目标可以通过配置信息以静态形式指定,也可以让Prometheus通过服务发现的机制进行动态管理。下面是组件的一些解析:

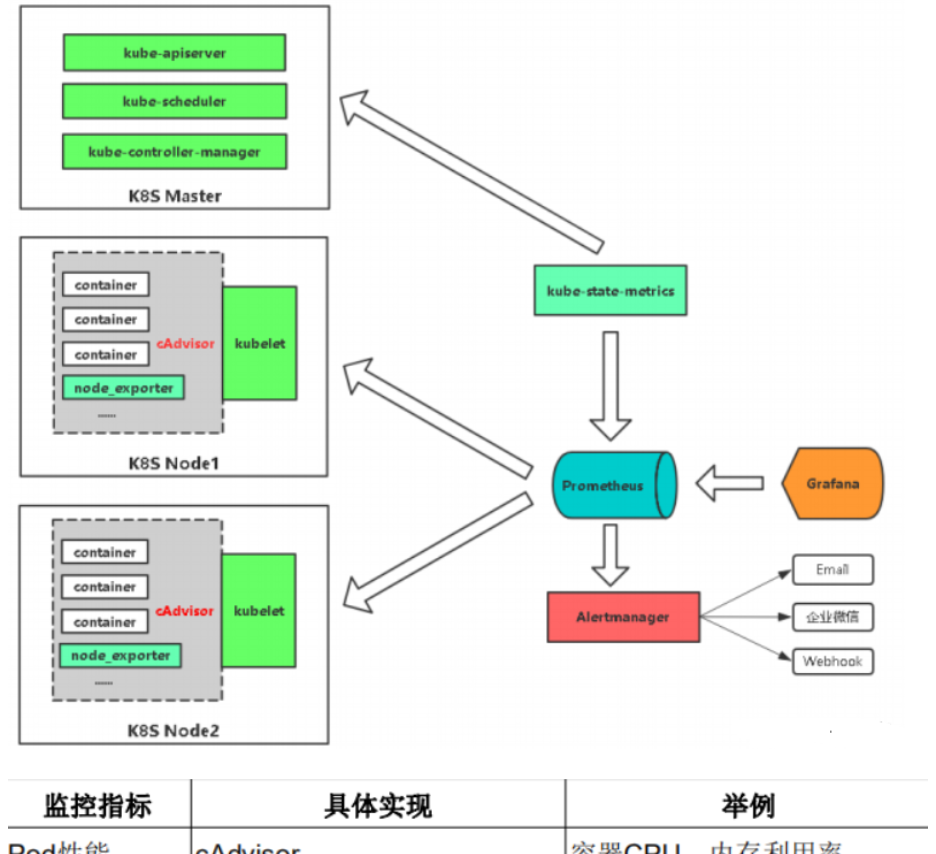

监控代理程序:如node_exporter:收集主机的指标数据,如平均负载、CPU、内存、磁盘、网络等等多个维度的指标数据。

kubelet(cAdvisor):收集容器指标数据,也是K8S的核心指标收集,每个容器的相关指标数据包括:CPU使用率、限额、文件系统读写限额、内存使用率和限额、网络报文发送、接收、丢弃速率等等。

API Server:收集API Server的性能指标数据,包括控制队列的性能、请求速率和延迟时长等等

etcd:收集etcd存储集群的相关指标数据

kube-state-metrics:该组件可以派生出k8s相关的多个指标数据,主要是资源类型相关的计数器和元数据信息,包括制定类型的对象总数、资源限额、容器状态以及Pod资源标签系列等。

Prometheus 能够 直接 把 Kubernetes API Server 作为 服务 发现 系统 使用 进而 动态 发现 和 监控 集群 中的 所有 可被 监控 的 对象。 这里 需要 特别 说明 的 是, Pod 资源 需要 添加 下列 注解 信息 才 能被 Prometheus 系统 自动 发现 并 抓取 其 内建 的 指标 数据。

prometheus. io/ scrape: 用于标识是否需要被采集 指标 数据, 布尔 型 值, true 或 false。

prometheus. io/ path: 抓取 指标 数据 时 使用 的 URL 路径, 一般 为/ metrics。

prometheus. io/ port: 抓取 指标 数据 时 使 用的 套 接 字 端口, 如 8080。

另外, 仅 期望 Prometheus 为 后端 生成 自定义 指标 时 仅 部署 Prometheus 服务器 即可, 它 甚至 也不 需要 数据 持久 功能。 但 若要 配置 完整 功能 的 监控 系统, 管理员 还需 要在 每个 主机 上 部署 node_ exporter、 按 需 部署 其他 特有 类型 的 exporter 以及 Alertmanager。

监控指标

集群监控

节点资源利用率

节点数

运行pods

…

pod监控

容器指标

应用程序

Prometheus监控K8S架构

2. 在K8S中部署Prometheus+Grafana

2.1 部署应用promentheus

2.1.1 创建prometheus

[root@kubernetes-master-001 ~]# mkdir -p /monitor/promethus && cd /monitor/promethus2.1.2 创建deployment文件

[root@kubernetes-master-001 ~/monitor/promethus]# vi promethus_deploy.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

name: prometheus-deployment

name: prometheus

namespace: kube-system

spec:

replicas: 1

selector:

matchLabels:

app: prometheus

template:

metadata:

labels:

app: prometheus

spec:

containers:

- image: prom/prometheus:v2.0.0

name: prometheus

command:

- "/bin/prometheus"

args:

- "--config.file=/etc/prometheus/prometheus.yml"

- "--storage.tsdb.path=/prometheus"

- "--storage.tsdb.retention=24h"

ports:

- containerPort: 9090

protocol: TCP

volumeMounts:

- mountPath: "/prometheus"

name: data

- mountPath: "/etc/prometheus"

name: config-volume

resources:

requests:

cpu: 100m

memory: 100Mi

limits:

cpu: 500m

memory: 2500Mi

serviceAccountName: prometheus

volumes:

- name: data

emptyDir: {}

- name: config-volume

configMap:

name: prometheus-config2.1.3 创建service文件

[root@kubernetes-master-001 ~/monitor/promethus]# vi promethus_svc.yaml

kind: Service

apiVersion: v1

metadata:

labels:

app: prometheus

name: prometheus

namespace: kube-system

spec:

type: NodePort

ports:

- port: 9090

targetPort: 9090

nodePort: 30003

selector:

app: prometheus2.1.4 创建configmap文件

[root@kubernetes-master-001 ~/monitor/promethus]# vi configmap.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: prometheus-config

namespace: kube-system

data:

prometheus.yml: |

global:

scrape_interval: 15s

evaluation_interval: 15s

scrape_configs:

- job_name: 'kubernetes-apiservers'

kubernetes_sd_configs:

- role: endpoints

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]

action: keep

regex: default;kubernetes;https

- job_name: 'kubernetes-nodes'

kubernetes_sd_configs:

- role: node

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- target_label: __address__

replacement: kubernetes.default.svc:443

- source_labels: [__meta_kubernetes_node_name]

regex: (.+)

target_label: __metrics_path__

replacement: /api/v1/nodes/${1}/proxy/metrics

- job_name: 'kubernetes-cadvisor'

kubernetes_sd_configs:

- role: node

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- target_label: __address__

replacement: kubernetes.default.svc:443

- source_labels: [__meta_kubernetes_node_name]

regex: (.+)

target_label: __metrics_path__

replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

- job_name: 'kubernetes-service-endpoints'

kubernetes_sd_configs:

- role: endpoints

relabel_configs:

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]

action: keep

regex: true

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]

action: replace

target_label: __scheme__

regex: (https?)

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]

action: replace

target_label: __metrics_path__

regex: (.+)

- source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]

action: replace

target_label: __address__

regex: ([^:]+)(?::d+)?;(d+)

replacement: $1:$2

- action: labelmap

regex: __meta_kubernetes_service_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

action: replace

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_service_name]

action: replace

target_label: kubernetes_name

- job_name: 'kubernetes-services'

kubernetes_sd_configs:

- role: service

metrics_path: /probe

params:

module: [http_2xx]

relabel_configs:

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_probe]

action: keep

regex: true

- source_labels: [__address__]

target_label: __param_target

- target_label: __address__

replacement: blackbox-exporter.example.com:9115

- source_labels: [__param_target]

target_label: instance

- action: labelmap

regex: __meta_kubernetes_service_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_service_name]

target_label: kubernetes_name

- job_name: 'kubernetes-ingresses'

kubernetes_sd_configs:

- role: ingress

relabel_configs:

- source_labels: [__meta_kubernetes_ingress_annotation_prometheus_io_probe]

action: keep

regex: true

- source_labels: [__meta_kubernetes_ingress_scheme,__address__,__meta_kubernetes_ingress_path]

regex: (.+);(.+);(.+)

replacement: ${1}://${2}${3}

target_label: __param_target

- target_label: __address__

replacement: blackbox-exporter.example.com:9115

- source_labels: [__param_target]

target_label: instance

- action: labelmap

regex: __meta_kubernetes_ingress_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_ingress_name]

target_label: kubernetes_name

- job_name: 'kubernetes-pods'

kubernetes_sd_configs:

- role: pod

relabel_configs:

- source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]

action: keep

regex: true

- source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]

action: replace

target_label: __metrics_path__

regex: (.+)

- source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]

action: replace

regex: ([^:]+)(?::d+)?;(d+)

replacement: $1:$2

target_label: __address__

- action: labelmap

regex: __meta_kubernetes_pod_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

action: replace

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_pod_name]

action: replace

target_label: kubernetes_pod_name2.1.5 创建rabc文件

[root@kubernetes-master-001 ~/monitor/promethus]# vi rabc-setup.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: prometheus

rules:

- apiGroups: [""]

resources:

- nodes

- nodes/proxy

- services

- endpoints

- pods

verbs: ["get", "list", "watch"]

- apiGroups:

- extensions

resources:

- ingresses

verbs: ["get", "list", "watch"]

- nonResourceURLs: ["/metrics"]

verbs: ["get"]

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: prometheus

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: prometheus

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: prometheus

subjects:

- kind: ServiceAccount

name: prometheus

namespace: kube-system2.1.6 创建应用并验证

[root@kubernetes-master-001 ~/monitor/promethus]# kubectl create -f .

configmap/prometheus-config created

deployment.apps/prometheus created

service/prometheus created

clusterrole.rbac.authorization.k8s.io/prometheus created

serviceaccount/prometheus created

clusterrolebinding.rbac.authorization.k8s.io/prometheus created

[root@kubernetes-master-001 ~/monitor/promethus]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

prometheus-68546b8d9-9mgft 1/1 Running 0 3m30s

[root@kubernetes-master-001 ~/monitor/promethus]# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 10.96.0.10 <none> 53/UDP,53/TCP,9153/TCP 17d

metrics-server ClusterIP 10.98.147.122 <none> 443/TCP 17h

prometheus NodePort 10.99.251.153 <none> 9090:30003/TCP 12m2.2 部署Node_exporter

2.2.1 编写yaml文件

[root@kubernetes-master-001 ~/monitor/promethus]# vi node_expoter.yaml

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: node-exporter

namespace: kube-system

labels:

k8s-app: node-exporter

spec:

selector:

matchLabels:

k8s-app: node-exporter

template:

metadata:

labels:

k8s-app: node-exporter

spec:

containers:

- image: prom/node-exporter

name: node-exporter

ports:

- containerPort: 9100

protocol: TCP

name: http

---

apiVersion: v1

kind: Service

metadata:

labels:

k8s-app: node-exporter

name: node-exporter

namespace: kube-system

spec:

ports:

- name: http

port: 9100

nodePort: 31672

protocol: TCP

type: NodePort

selector:

k8s-app: node-exporter2.2.2 部署node_exporter并验证

[root@kubernetes-master-001 ~/monitor/promethus]# kubectl create -f node_expoter.yaml

daemonset.apps/node-exporter created

service/node-exporter created

[root@kubernetes-master-001 ~/monitor/promethus]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

node-exporter-49fkx 1/1 Running 0 89s

node-exporter-6wdpp 1/1 Running 0 89s

prometheus-68546b8d9-9mgft 1/1 Running 0 24m

[root@master promethus]# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 10.96.0.10 <none> 53/UDP,53/TCP,9153/TCP 4d21h

metrics-server ClusterIP 10.99.57.230 <none> 443/TCP 4d21h

node-exporter NodePort 10.98.54.77 <none> 9100:31672/TCP 32m

prometheus NodePort 10.99.244.91 <none> 9090:30003/TCP 5m15s2.3 部署Grafana

2.3.1 创建grafana目录

[root@kubernetes-master-001 ~/monitor/promethus]# cd ..

[root@kubernetes-master-001 ~/monitor]# mkdir grafana && cd grafana2.3.2 编写deployment文件

[root@kubernetes-master-001 ~/monitor/grafana]# vi grafana-deploy.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: grafana-core

namespace: kube-system

labels:

app: grafana

component: core

spec:

selector:

matchLabels:

app: grafana

replicas: 1

template:

metadata:

labels:

app: grafana

component: core

spec:

containers:

- image: grafana/grafana:4.2.0

name: grafana-core

imagePullPolicy: IfNotPresent

# env:

resources:

# keep request = limit to keep this container in guaranteed class

limits:

cpu: 100m

memory: 100Mi

requests:

cpu: 100m

memory: 100Mi

env:

# The following env variables set up basic auth twith the default admin user and admin password.

- name: GF_AUTH_BASIC_ENABLED

value: "true"

- name: GF_AUTH_ANONYMOUS_ENABLED

value: "false"

# - name: GF_AUTH_ANONYMOUS_ORG_ROLE

# value: Admin

# does not really work, because of template variables in exported dashboards:

# - name: GF_DASHBOARDS_JSON_ENABLED

# value: "true"

readinessProbe:

httpGet:

path: /login

port: 3000

# initialDelaySeconds: 30

# timeoutSeconds: 1

volumeMounts:

- name: grafana-persistent-storage

mountPath: /var

volumes:

- name: grafana-persistent-storage

emptyDir: {}2.3.3 编写service文件

[root@kubernetes-master-001 ~/monitor/grafana]# vi grafana-svc.yaml

apiVersion: v1

kind: Service

metadata:

name: grafana

namespace: kube-system

labels:

app: grafana

component: core

spec:

type: NodePort

ports:

- port: 3000

selector:

app: grafana

component: core2.3.4 编写ingress文件

[root@kubernetes-master-001 ~/monitor/grafana]# vi grafana-ing.yaml

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: grafana

namespace: kube-system

annotations:

kubernetes.io/ingress.class: nginx

spec:

rules:

- host: grafana.test.mydomain.com

http:

paths:

- path: "/"

pathType: Prefix

backend:

service:

name: grafana

port:

number: 30002.3.5 部署应用并验证

[root@kubernetes-master-001 ~/monitor/grafana]# kubectl create -f .

deployment.apps/grafana-core created

ingress.networking.k8s.io/grafana created

service/grafana created

[root@kubernetes-master-001 ~/monitor/grafana]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

grafana-core-85587c9c49-945lv 1/1 Running 0 3m28s

metrics-server-599cdf68d4-cbngr 1/1 Running 0 18h

node-exporter-49fkx 1/1 Running 0 41m

node-exporter-6wdpp 1/1 Running 0 41m

prometheus-68546b8d9-9mgft 1/1 Running 0 64m

[root@kubernetes-master-001 ~/monitor/grafana]# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

grafana NodePort 10.111.128.51 <none> 3000:30369/TCP 3m42s

kube-dns ClusterIP 10.96.0.10 <none> 53/UDP,53/TCP,9153/TCP 17d

metrics-server ClusterIP 10.98.147.122 <none> 443/TCP 18h

node-exporter NodePort 10.107.177.177 <none> 9100:31672/TCP 41m

prometheus NodePort 10.99.251.153 <none> 9090:30003/TCP 64m2.4 浏览器验证部署

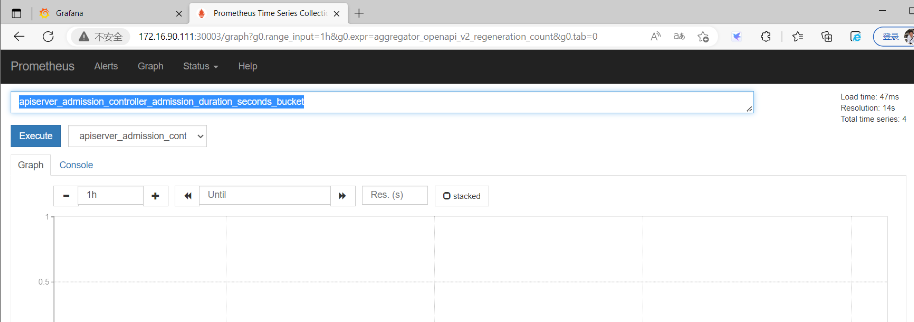

2.4.1 Prometheus访问测试

2.4.2 Grafana访问测试

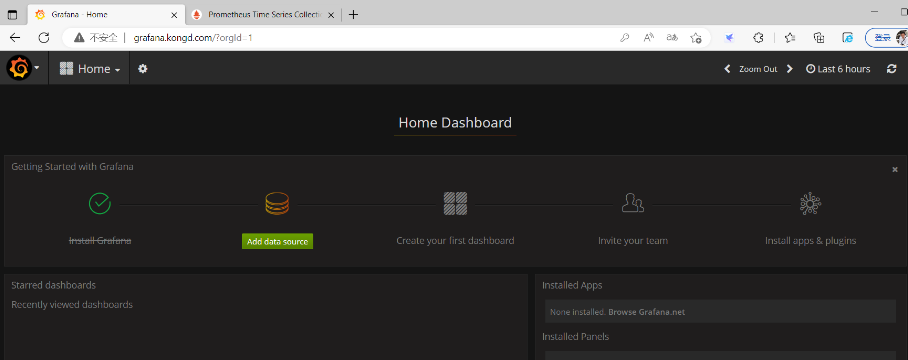

#1.通过域名访问:grafana.kongd.com,默认用户名密码:admin

#2.登录成功后出现以下界面

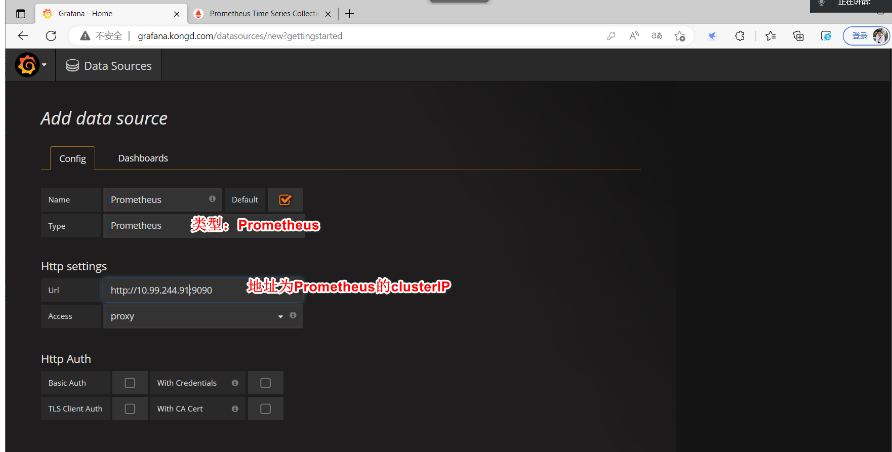

#3.配置数据源,使用promethus

#4.设置显示数据模板

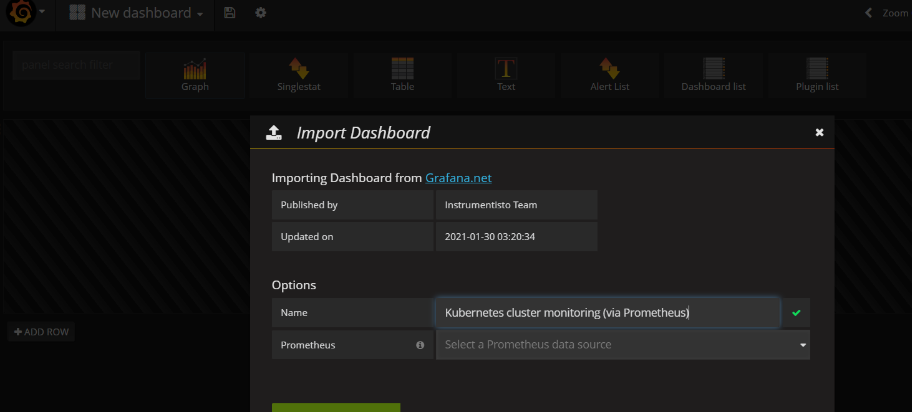

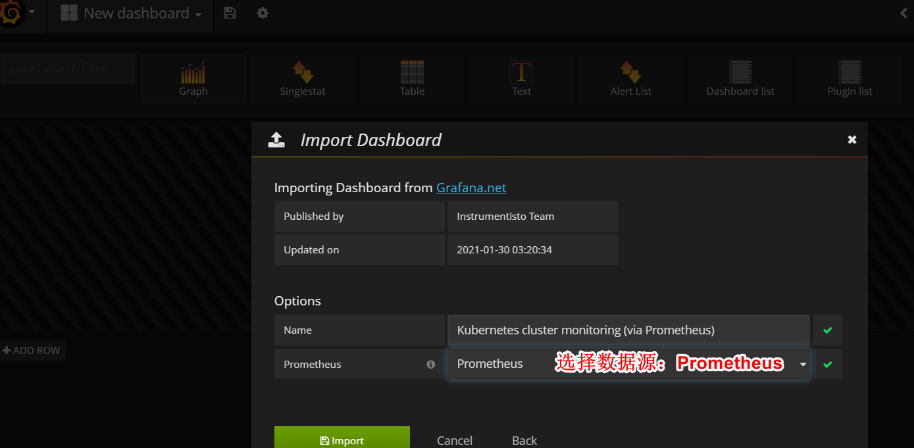

导入面板:Home->Dashboards->Import,可以直接输入模板编号315在线导入

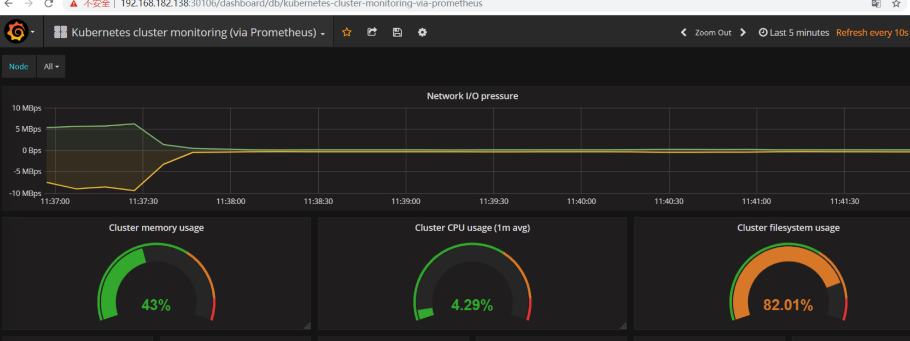

点击Import,即可查看展示效果

也可单独添加以下模块监控。

Grafana.com

• 集群资源监控:3119

• 资源状态监控 :6417

• Node监控 :9276

监控测试

部署测试实例

部署实例

[root@k8s-master k8s-prometheus-grafana]# kubectl run apache --image=httpd --replicas=2查看

[root@k8s-master k8s-prometheus-grafana]# kubectl get deployments.apps -o wide创建svc

[root@k8s-master k8s-prometheus-grafana]# kubectl expose deployment apache --port=88 --target-port=80 --type=NodePort访问:grafana.kongd.com

暂无评论内容